Altered and automated engagement with beliefs

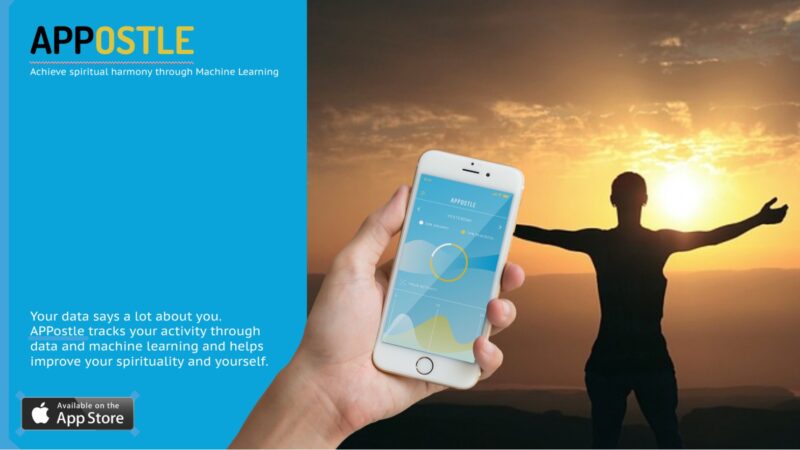

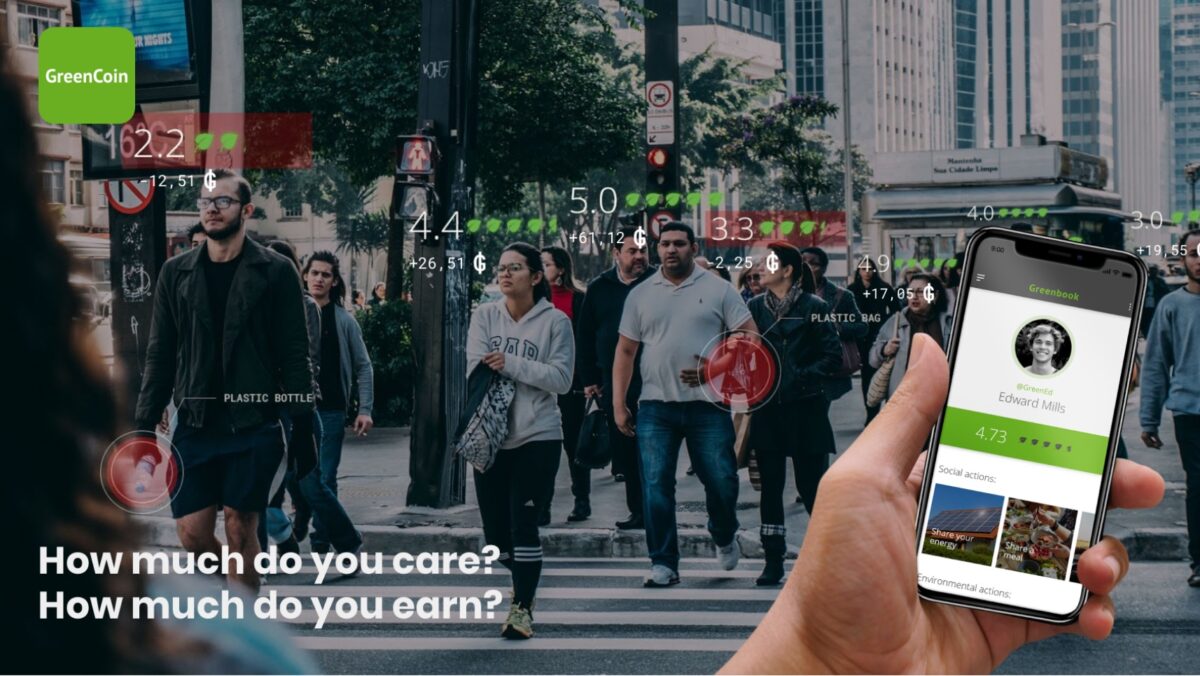

In the future, we foresee AI potentially advancing to the point where it can codify and model the highly complex ethical parameters of everyday life. Services could evolve to understand, translate and guide people through the implicit ethical components of decisions: who to vote for, who to pray for, what products to buy or abstain from, which organisations to support, how to raise and educate a child, and who to follow. With this technological capacity, we envisage the politicisation of many organisations and activities, along with the ability to track behaviours and assign people to distinct ethical categories, bringing a new kind of transparency and clarity to people’s beliefs.

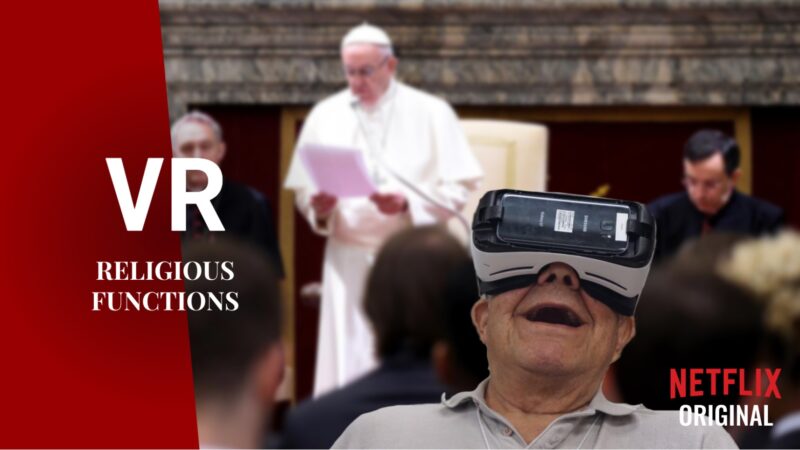

The complexity of adhering to ethical principles and making distinct choices often leads people to want to outsource these decisions. This is not fundamentally new. People frequently defer to other ethical structures for guidance, such as religious leaders, family beliefs, friendship groups, social media bubbles, journalists or role models. What could expand in this context is the ability to explicitly select your own values, combine them with other ethical structures you have adopted, and let that combination algorithmically guide you through everyday decisions.