Mymes uses an understanding of people’s behaviour to create simulations of their future to help them make decisions. It distils different sides of their character to help them explore who they are.

What is the problem?

People sometimes struggle to make decisions that benefit their future, and a lack of identity exploration can hinder people’s resilience.

The Mymes proposition responds to two overlapping problem areas. On the one hand, we see that people, particularly young people in significant transitionary moments of their lives, struggle to make complex decisions.

For instance, we can see that lifestyle-related decisions, perhaps around food, stress, exercise, sleeping or smoking, drive a huge amount of the chronic health conditions faced in the United Kingdom. On the surface, it’s possible to argue that the healthier options in these cases are simple to choose, i.e. ‘don’t smoke, eat healthily, sleep more’, but in reality people have to make complex assessments to do with money, work, finance or relationships, which can make these decisions difficult.

The other area of interest within this proposition is the exploration of identity. Research suggests that when young people explore their identity, i.e. try out different models of identity and experiment with how they fit within the world and see themselves, they can often be more emotionally resilient later in life when something challenges the identity they had established. It is argued that, as a consequence of having experimented with who they are, they become more capable of reforming their perceptions of themselves without the same level of emotional loss as somebody who had not experimented. Additionally, it could be argued that people hold different identities during the same period. Someone may act, behave and feel completely differently in different contexts, even on the same day, so it may not be that people experiment with their identities one at a time. This proposition also considers how AI may be adopted to support people as they navigate the complexity of identity exploration.

How ‘Mymes’ responds

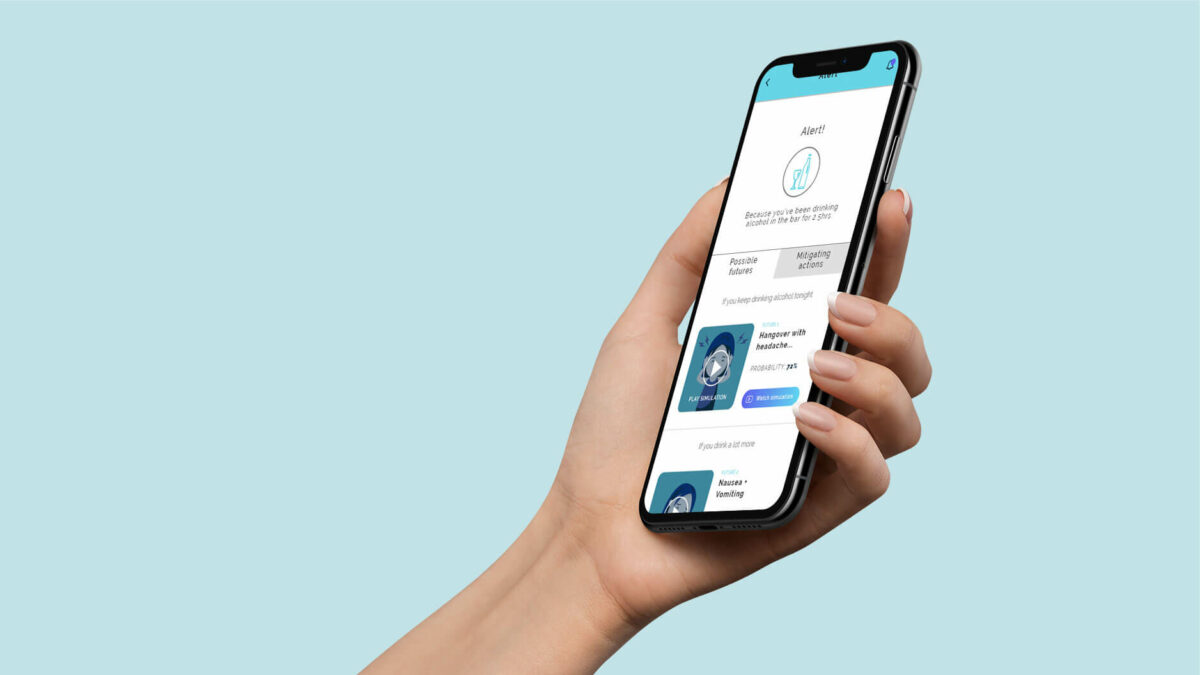

Mymes empowers users with high levels of personal data and insight. It uses this data to show people future versions of themselves, based on their choices in the present, enabling longer-term decision making. The service also helps people understand a nuanced, multi-faceted perspective of their identity, expanding people’s concept of themselves and encouraging self-exploration.

Simulate the future:

Mymes creates a digital avatar of the user, simulating potential future outcomes of their decisions through AI. Initially, it would take in data from all of your other apps and support simple decisions, such as how to adjust your daily commute in ways that might save you money, lift your mood or improve your health. But after a while, the service would start to develop its own intelligence about you and simulate more complex alterations to your life. It allows people to explore their potential future realities, helping them think about longer-term implications and consider more options.

Monitor and track your life:

Mymes drives its simulations by monitoring whatever actions people allow it to, through connections with other apps and devices. This data not only drives the simulations, but also helps people understand their own patterns simply by giving them more visibility and transparency on their behaviours. Essentially, the service is trying to use the power of its data to help build a user’s agency by teaching them about themselves, and subsequently helping them make more informed decisions by themselves.

See different sides of yourself:

As Mymes learns more and more about people, it’s able to discern different modes of behaviour that happen at different times, in different cycles, in different places, at different events, under different conditions and with different people. It categorises these variations in people’s behaviour to form representations of different sides of them, so they can see the different modes of behaviour they adopt in different circumstances and with different people.

See how different versions of you behave:

The user can explore these different categories to see things such as their spending behaviour and the types of food they eat. Depending on the sensor data that Mymes is allowed to collect, it could even monitor some variations in emotional states. These different categories of self could distinctly represent elements of people’s identity that are heightened in different contexts, and help them discern a more varied and nuanced understanding of themselves rather than trying to form a singular coherent concept. In doing so, Mymes creates paths to explore identity in new ways.

What we learnt

We demonstrated a low-fidelity prototype of Mymes to high-need users and this is what we learnt:

- many people appreciated that the problem Mymes was trying to tackle was real and that the general approach of the service was appropriate;

- most people were sceptical about how much they would use the service; and,

- there was a lot of disagreement among users about how the service’s strategies could be refocused to improve it.

Emerging conversations from this proposition are about:

- the way that choices can be supported by technology without becoming invasive; and,

- how having multifaceted representations of oneself could practically be valuable in everyday life.

Only some choices should be supported

An interesting area of discussion that emerged with users was what counted as an appropriate recommendation or simulation to give. The general approach of the service proposal was that it could be wide-ranging and encompass large categories of your life, offering anything from health simulations to career simulations. The simulations were illustrated to give people a more engaging way to interact with and understand the outcomes of their actions, but most users found these illustrations inappropriate for anything other than very practical choices.

There was wide disagreement among the group about how and when people wanted an intervention from the app. Some said that it could feel overbearing for the intervention to be regular. Some said that it would be best if it intervened regularly in small health choices that have larger long-term implications (like dietary choices, for instance). Others felt that technological services like this could only help with decisions that go wrong through absentmindedness, where the service could intervene to improve the decision.

This variety of opinion is evidence that services that become so intimately intertwined with people’s lives either need to learn to be adept at responding to users’ cues for assistance or need to have active personalisation built into the service experience.

Categorising identity

People found the concept of categorising different aspects of themselves useful as a mental exercise. For many of them, it helped them feel positive about themselves because, to them, it meant they had varied and complex characters. Many participants also talked about how the recollection of sides of themselves which are now less active was both nostalgic and powerful, because although that side of themselves was less active, they felt it still belonged to their identity and seeing or describing it enriched their current view of themselves.

Although most participants felt that categorising sides of themselves was meaningful and rewarding, they struggled to see how digital categorisation would be helpful in their lives beyond simply being a reminder or an archived part of themselves. The proposal essentially suggests that a divided conceptualisation of the self might expand people’s self-understanding, and that reminding people of their potential to be multifaceted could encourage them to explore more. However, this relies on a large assumption: that the categorisations would be sophisticated enough to be taken seriously and would inherently convey the message that ‘people can be whoever they want to be’. To the participants engaging with the proposal, this was not a safe assumption and the feature was unappealing.

If we simply consider the fact that people felt enriched and more powerful having recalled categories of their personality (without the strategy employed by Mymes), it is clear that such recall is a valuable component of people’s self-reflection. Perhaps, therefore, there are still some interesting questions to be explored in this space with different concepts: Could reflecting on oneself through the lens of different personal identities help people feel more free to explore and act in ways outside of their current ‘preset character’? Could it help them understand themselves in more depth? Could it help people to act with more control?

Our new direction of exploration

If this proposition is taken further, the strategic question of relevance to our investigations is more along the lines of:

Can AI-driven self-understanding benefit people in new ways by encouraging a categorised view of self-identity, and how can AI-enriched decision-making interject in people’s lives in a non-invasive, supportive manner?

Related to ‘Mymes’

Scenarios

Identity Fluidity

Identity could grow more fluid as people gain more freedom to pursue the lifestyles of their choosing outside normative assumptions of race, nationality, sexuality, gender, age and more. More nuanced characteristics are supported by social media niches, alongside AI that may raise self-awareness to help you define what works for you.