Early testing and development

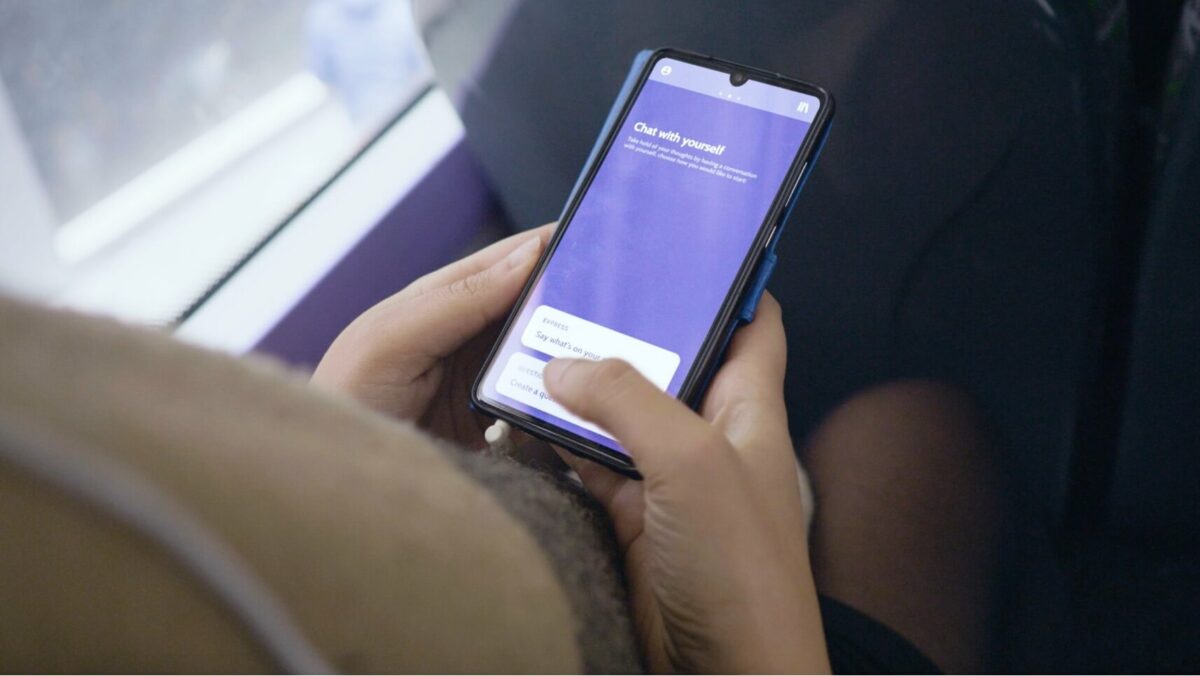

We developed a prototype that would be the simplest version of our service. This prototype could meaningfully mimic the final offering, so we could experiment, understand the user experience and hone the final proposition before investing too heavily in a native app for the public.

Therefore, we created a web app which encouraged users to ask themselves a question and then answer it. The intent was that users would inadvertently find themselves having a fluid conversation with themselves, in which they might recognise more of their own capacity to respond to their challenges with a clearer thought process.

We recruited 30 users aged 20 to 50 with mixed levels of self-declared anxiety to use the service as much as they wished over a two-week period. Through interviews with the participants, their usage data, survey responses and the contents of their conversations, we gained many new insights into how people experienced our proposition.

The most crucial learning was that most people were sceptical about the idea of having conversations with themselves. People often felt it was embarrassing or ineffective, or weren’t quite sure what to do. However, we also found that some people (including some sceptics) were surprised that the process eventually provided strong results for them by helping them think more clearly, feel listened to or even feel less alone. With these main insights, we focussed on guiding and simplifying the conversation process and tried to manage user expectations from the start.

In addition, we found that people wanted more than just to have and store conversations; they wanted to be given a new understanding of themselves based on what they had written. While there are many advanced mechanisms for delivering more understanding, the team sought out new, simple methods to curate users' conversations in ways that would reveal new insights to them. One approach to this was to give more emphasis to an already appreciated mood rating feature within the prototype.